Most of us have developed our ideas of what artificial intelligence should look like from movies, television shows, and popular novels. When thinking about AI, our minds flash to images of robots masquerading as humans, as we saw in Spielberg’s film A.I. Artificial Intelligence, or in countless other films such as Blade Runner and the Alien movies. Or we conjure up ideas of computers that think and speak like humans, such as HAL 9000, which so famously told Dave “I’m sorry Dave, I’m afraid I can’t do that” in the film 2001: A Space Odyssey.

Over the years, in our real-life experiences, we’ve become accustomed to computers and other devices asking us questions, qualifying us via complicated phone menus, or helping us to find quick facts with the aid of assistants like Siri. We still enjoy little 2D games like Super Smash Flash 2, but the next generation will be more demanding. We realize that the AI found in Hollywood is years away, and we are realistic about the technology we have today and how it’s progressing. After all, making a piece of software that can think and act exactly like a human is a pretty tall order. Or is it?

The challenge for designers of the next generation of AI is to progress the field beyond where we are today, so it feels more like the visions of the future provided by TV, books and film. This will take time and, like everything else that evolves, will jump forward in fits and starts. One thing is sure, though: people will be less and less content with experiences where AI just picks from a list of predetermined responses. They will demand systems and products designed with more “machine-learned” responses. Users want to evolve from what today may be a good experience into what in the future will become known as a great experience.

What Makes Good AI?

AI can mean different things to different people. Each industry can perceive the term differently and have a different set of preconceived expectations. Some of the most commonly experienced AI programs are asymmetric products such as Facebook, Amazon, or Google, which allow a user to cut through large amounts of information almost effortlessly. These experiences are using “dumb” AI, though, and are not complex. For the tasks we use them for today, these kinds of AI can be described as “good enough.”

Symmetric AI takes this experience several steps further. Users search for the same information in both cases. The symmetric AI, however, tends to ask questions and gather other data such as facial expressions to judge the user’s intent. Much more input data is gleaned than with the asymmetric AI model. The result is an AI that is much more engaging and useful, personal, explorative, or intensive in gathering the desired end-result. This is far better than “good enough.”

Another kind of AI can almost be labeled as “too good.” This can happen in a variety of ways. For instance, the product uses strict, predetermined responses for input and churns out a lot of results that the user must then wade through. Using these types of AI doesn’t actually help users learn or expand their experience in any way. Instead, it teaches users to misuse the bots in order to try to find a flaw in the algorithm. This type of AI also allows for only “good enough” results.

Because of these challenges, developing a good AI is a multi-faceted puzzle. A good AI isn’t perfect. It makes blunders but tries to avoid predictable patterns. Then, the AI uses these mistakes and learned patterns to adapt and provide variances in how it replies. The resulting product offers more value and entertainment for the user than an AI that merely answers with the same response time after time.

iPhone users can experience this for themselves. Just tell Siri that you love her, and she will give different responses, all of which are entertaining. Eventually, though, you’ll reach the end of her predetermined responses, and she’ll start to sound repetitive. At the end of the day, though, it’s better than hearing just one canned response.
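To make the idea of varied replies concrete, here is a minimal sketch of a responder that picks from a fixed list at random but refuses to reuse its most recent answers. It is only an illustration and says nothing about how Siri actually works; the class name `VariedResponder`, the `memory` parameter, and the sample replies are all assumptions made for this example.

```python
import random
from collections import deque


class VariedResponder:
    """Pick replies at random while avoiding the most recently used ones."""

    def __init__(self, replies, memory=2, rng=None):
        # Drop duplicate replies so the "recently used" check stays reliable.
        self.replies = list(dict.fromkeys(replies))
        if not self.replies:
            raise ValueError("replies must not be empty")
        # Never remember so many replies that nothing is left to choose from.
        limit = max(0, min(memory, len(self.replies) - 1))
        self.recent = deque(maxlen=limit)
        self.rng = rng or random.Random()

    def reply(self):
        # Only replies that were not used in the last few turns are candidates.
        candidates = [r for r in self.replies if r not in self.recent]
        choice = self.rng.choice(candidates)
        self.recent.append(choice)
        return choice


if __name__ == "__main__":
    # Hypothetical canned replies, invented for this sketch.
    bot = VariedResponder([
        "You are the wind beneath my wings.",
        "I bet you say that to all your devices.",
        "That's nice. Can we get back to work now?",
        "Oh, stop.",
    ])
    for _ in range(5):
        print(bot.reply())
```

Even with this trick the pool is still finite, so the repetition described above is only delayed, not removed. That is the gap a learned, adaptive system would have to close.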

Designing with Feeling

Every AI should be designed with several goals in mind. For starters, the AI should be fun. Second, the experience with the AI should be rewarding (in other words, it actually needs to do what you are asking it to), and lastly, the AI should not digress from the task at hand, either by design or by accident.

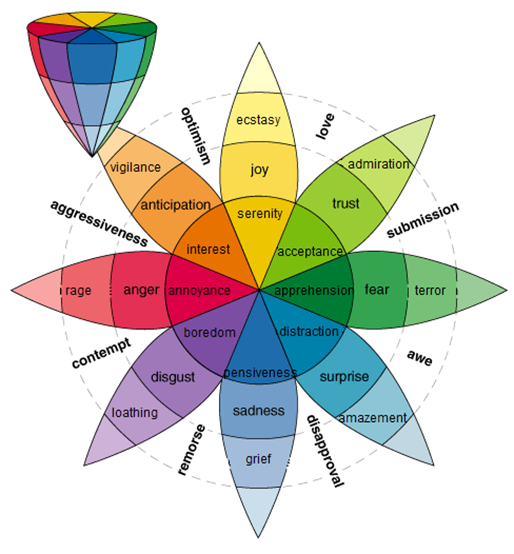

An aspect of AI design which is highly desirable, but not simple to create, could in fact be the most important of all design facets: Emotion. Emotion is the fine line which separates human interaction and computer interaction. Machines don’t feel emotions. They can fake them, but humans easily see through that. It’s possible, however, to design some personality into an AI that mimics emotion enough to create a more rewarding experience.

Learning is an emotional process. There’s the joy of discovery, the agony of defeat, and the shared experience of gaining new knowledge. AI should be in sync with these types of emotions to generate these feelings, acknowledge them, and even reward them at times. UX designers who can emulate these emotional connections will take us another step further into the types of AI experiences that we’ve longed for in popular culture since the first science fiction story was written, put onto the silver screen or broadcast into our living rooms via our television sets.

What Makes AI Fun?

Predictability isn’t fun. It’s boring. That’s why good AI should be unpredictable. Otherwise, why interact with it if you already know how it will respond? Simple video game AI accomplishes this. A level boss in a good, modern-day first person shooter may have some predictable patterns, but it will never do exactly the same thing each and every time you restart the level.

This is a great improvement over arcade games from 30 years ago that had preset patterns. If someone was blessed with amazing memory, for example, he could play Mario Brothers blindfolded just by memorizing when to jump, stop or perform other actions. Today’s AI in video games is far better because it’s harder to predict and more difficult to beat. This makes it more of a challenge, which is more fun. In these cases, artificial intelligence is fun because it just feels less… artificial.
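One common way to get a boss that has recognizable habits without ever replaying the same script is weighted random selection with a penalty for repeating the last move. The sketch below is a toy illustration under that assumption, not a description of any particular game; the move names, the weights, and the `repeat_penalty` parameter are hypothetical.

```python
import random

# Hypothetical boss moves and how strongly the boss favors each one.
BOSS_MOVES = {"charge": 5, "fireball": 3, "summon": 1, "retreat": 1}


def next_move(previous, rng, moves=BOSS_MOVES, repeat_penalty=0.25):
    """Choose a move by weight, making an immediate repeat less likely."""
    names = list(moves)
    weights = [
        moves[name] * (repeat_penalty if name == previous else 1.0)
        for name in names
    ]
    return rng.choices(names, weights=weights, k=1)[0]


def play_level(turns, seed=None):
    """Return the sequence of moves the boss makes during one attempt."""
    rng = random.Random(seed)  # seed=None gives a fresh sequence per attempt
    sequence, previous = [], None
    for _ in range(turns):
        previous = next_move(previous, rng)
        sequence.append(previous)
    return sequence


if __name__ == "__main__":
    print(play_level(8))
    print(play_level(8))
```

The weights give the boss a personality the player can learn ("it loves to charge"), while the randomness means memorizing one run is not enough to beat the next one. Passing a fixed `seed` reproduces a run exactly, which is handy for debugging.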

What Makes AI Rewarding?

When interacting with AI, we have a different set of expectations than we do when dealing with another human being. In other words, our expectations are lower. We don’t expect the AI to make us happy, for instance. And we certainly don’t think that we can make the AI happy. But what if we could?

Designing a happiness index into an AI would allow for joy to be created by interactions. When two humans enjoy interacting with each other, it makes them happy. The same could happen with a properly designed AI. Once the user is able to see or hear when an AI is happy, it will improve the interaction as well. This can be accomplished with a visual or verbal cue that represents the AI’s current level of happiness on a happiness meter, for example.
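As a rough sketch of what such a happiness meter could look like in code, the example below keeps a single score, nudges it up or down after each interaction, and turns it into a verbal cue and a simple text bar. The 0 to 100 scale, the thresholds, and the names `HappinessMeter`, `record`, `cue`, and `bar` are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class HappinessMeter:
    """Track an AI's 'happiness' on an assumed scale from 0 to 100."""

    level: int = 50  # start out neutral

    def record(self, delta):
        """Apply the effect of one interaction, clamped to the 0-100 range."""
        self.level = max(0, min(100, self.level + delta))
        return self.level

    def cue(self):
        """Verbal cue for the current level (thresholds are arbitrary)."""
        if self.level >= 70:
            return "happy"
        if self.level >= 40:
            return "neutral"
        return "unhappy"

    def bar(self, width=10):
        """Visual cue: a text meter such as '#####-----'."""
        filled = round(self.level / 100 * width)
        return "#" * filled + "-" * (width - filled)


if __name__ == "__main__":
    meter = HappinessMeter()
    meter.record(+30)   # e.g. the user said thank you
    print(meter.cue(), meter.bar())
    meter.record(-60)   # e.g. the user was rude several times
    print(meter.cue(), meter.bar())
```

What counts as a positive or negative interaction, and by how much it moves the meter, is the real design work. The code only shows how the result could be surfaced to the user.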

One caution when designing with emotion in mind is to take into account how much an AI wants to succeed. An AI aims to maximize the results of a session every time. If this meant making a poor move, then it would do so regardless, as long as that move contributed to the success of the session. Because of this, there needs to be some type of “fairness” built into the program that makes users less likely to experience just such a situation.

How to Do AI the Right Way

It’s impossible to predict with any amount of certainty how to design the perfect AI. There are years yet to wait and many advancements yet to come that will help in this endeavor. A few things are certain, though. The enjoyment level of interaction with well-designed AI will be high. It will elicit emotions and have a distinct personality that enhances engagement. The AI will be entertaining if it contains all of these elements, and it will also feel more like a “being” than a bot. That’s the key here. AI will not feel artificial but instead will feel genuine, clever, unpredictable, and as a result, more exciting, rewarding and, best of all, more “real.”

Originally posted here: https://medium.com/@mgcreativefactor/a-great-ai-experience-shouldnt-even-feel-like-an-ai-experience-at-all-f0a14aee270#.3098cwsjj

June 12, 2016 at 7:47 pm

The realm of A.I. is fascinating. Nevertheless, it seems that A.I. developers keep putting more and more effort into making A.I. human-like. Is this A.I. development just for fun, or is it because of the lack of trust that humans still have in one another?