The debate about artificial intelligence is flawed at its core. Here is one quick fix for the deeply flawed lose-lose, greater-good car-crash scenario so often posed for AI.

I am addressing flaws in the following research:

Autonomous Vehicles Need Experimental Ethics: Are We Ready for Utilitarian Cars? By Jean-François Bonnefon, Azim Shariff, Iyad Rahwan (Submitted on 12 Oct 2015).

The answer is to spread harm equally:

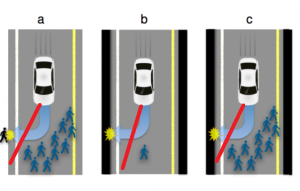

Note the red lines I added to the image by Bonnefon et al. Harm should be minimized for everyone in the accident: if the choice is between hitting two people, the car aims for the middle of the two. If the choice is between hitting pedestrians and hitting a wall, the car should do both (graze the wall and the pedestrian or pedestrians), balancing harm according to the defenses of the car versus those of the pedestrian. That would mean more impact (but not full impact) with the wall.
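To make the balancing idea concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the function name `split_impact`, the notion of a single "protection" number per party, and the toy rule that harm equals a party's share of the impact divided by its protection. It is not taken from Bonnefon et al. and is not a real crash model; it only shows why equal harm implies more, but not all, of the impact going to the wall.

```python
def split_impact(car_protection: float, pedestrian_protection: float) -> tuple[float, float]:
    """Return (wall_share, pedestrian_share) of the impact, summing to 1.

    Toy assumption (hypothetical, not from the paper):
    harm = share of impact / protection, and the split is chosen
    so that both parties suffer equal harm.
    """
    if car_protection <= 0 or pedestrian_protection <= 0:
        raise ValueError("protection values must be positive")
    total = car_protection + pedestrian_protection
    # Equal harm means share / protection is the same for both parties,
    # so each share is proportional to that party's protection.
    wall_share = car_protection / total
    pedestrian_share = pedestrian_protection / total
    return wall_share, pedestrian_share


# Illustrative, made-up numbers: the car is four times better protected.
wall_share, pedestrian_share = split_impact(car_protection=4.0, pedestrian_protection=1.0)
print(f"wall: {wall_share:.0%}, pedestrian: {pedestrian_share:.0%}")
```

With these made-up numbers the better-protected car takes most of the impact against the wall, while the pedestrian is grazed rather than struck in full.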

IS THE SCENARIO REAL?

This is the most important question, and it cuts to the root of the flawed debate on AI self-driving cars.

How common, if it exists at all, is the supposed lose-lose situation in car crashes? I am skeptical that the situation exists; if it does, perhaps it is so rare that we can discount it. Sadly, the moral dilemma of a lose-lose car crash is often raised in discussions of AI self-driving cars.

What we actually need are statistics on how often this situation occurs in car crashes. Perhaps it never occurs? Maybe it is merely an unreasonable hypothetical, similar to:

What if God exists, surely we should all pray just in case?

Slate wrote (22 March 2016): “The senators questioned the panel about how cars would decide whom to strike in an inevitable lose-lose situation.”

IFLS wrote (26 Oct 2015): “Picture the scene: You’re in a self-driving car and, after turning a corner, find that you are on course for an unavoidable collision with a group of 10 people in the road with walls on either side. Should the car swerve to the side into the wall, likely seriously injuring or killing you, its sole occupant, and saving the group? Or should it make every attempt to stop, knowing full well it will hit the group of people while keeping you safe?”

Technology Review wrote (22 Oct 2015): “Bonnefon and co are seeking to find a way through this ethical dilemma by gauging public opinion. Their idea is that the public is much more likely to go along with a scenario that aligns with their own views.”

Google results provide no answer as to how frequent the hypothetical lose-lose scenario is:

https://www.google.com/search?q=lose+lose+car+crash

Is there a technical term for this type of crash?

We need facts, not wild imaginings.

* hero image used from http://www.valuewalk.com/wp-content/uploads/2015/12/Baidu-Self-Driving-Car.jpeg

March 22, 2016 at 11:12 pm

The only meaningful thing to be said in such situations is that adding another intelligent member to the whole family of road “gladiators” adds more meaningful ways of killing us!

March 23, 2016 at 9:11 pm

The route to the supermarket from our rural area winds through a suburban residential area posted at 50 kph where there are four stop signs. I adhere to the posted limit and stop at the stop signs. Often the following vehicle tailgates, and evidently the fact that I stop at the stop signs comes as a complete surprise. I eagerly await being followed by a Google car!

March 24, 2016 at 10:20 pm

as long as I can sleep on my way to work…